Hi! I am currently a final-year PhD student at the Institute for Adaptive and Neural Computation and part of the APRIL research lab in Edinburgh, supervised by Dr. Antonio Vergari.

I am a machine learning researcher and engineer with a strong background in mathematics, deep generative models, knowledge graphs, neurosymbolic methods, and LLMs. I am experienced with developing and training custom-built machine learning methods, as well as gluing existing methodologies together into new working systems.

Contact:

Useful links:

Curriculum Vitae

Publications

For a complete list refer to Semantic Scholar or Google Scholar.

* = Shared first authorship.

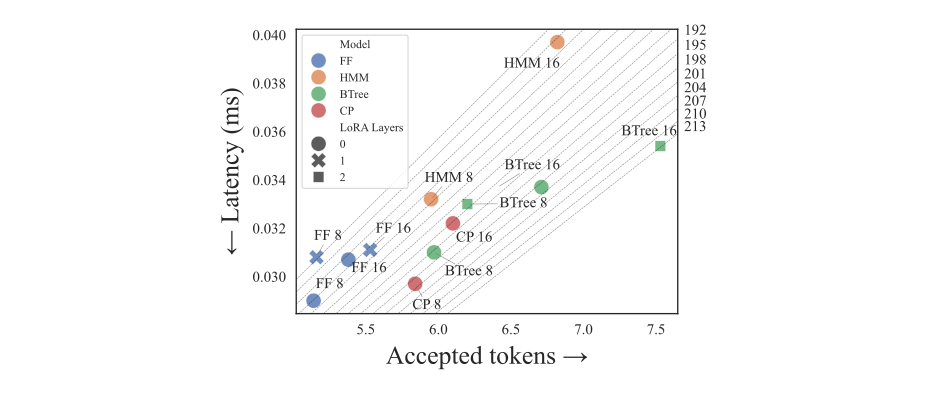

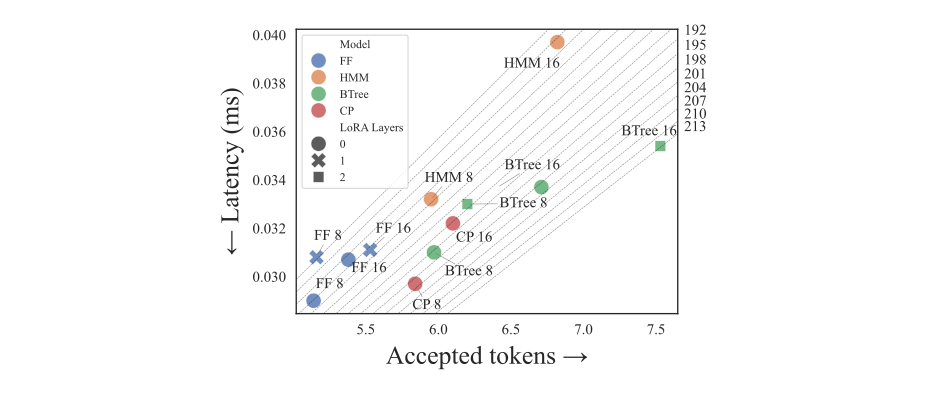

tl;dr: We propose a framework for multi-token prediction using speculative decoding, allowing us to easily explore different parameterizations balancing expressiveness and efficiency, and generalize other approaches based on tensor factorizations.

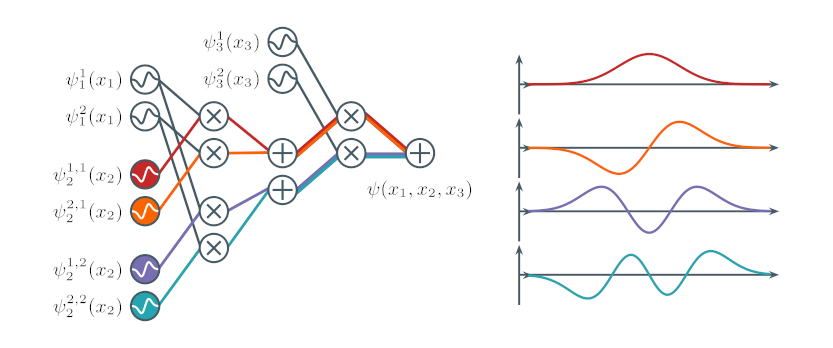

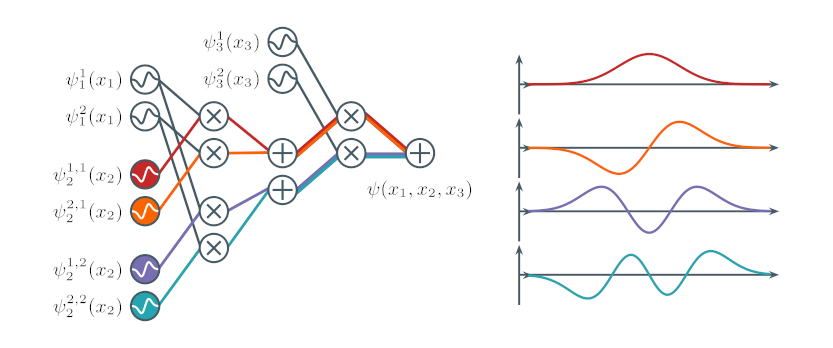

tl;dr: We derive novel circuit properties based on orthogonality to speed up marginalization in squared circuits and tensor network-based Born machines, as well as to unlock a strictly larger set of factorization structures enabling tractable marginalization.

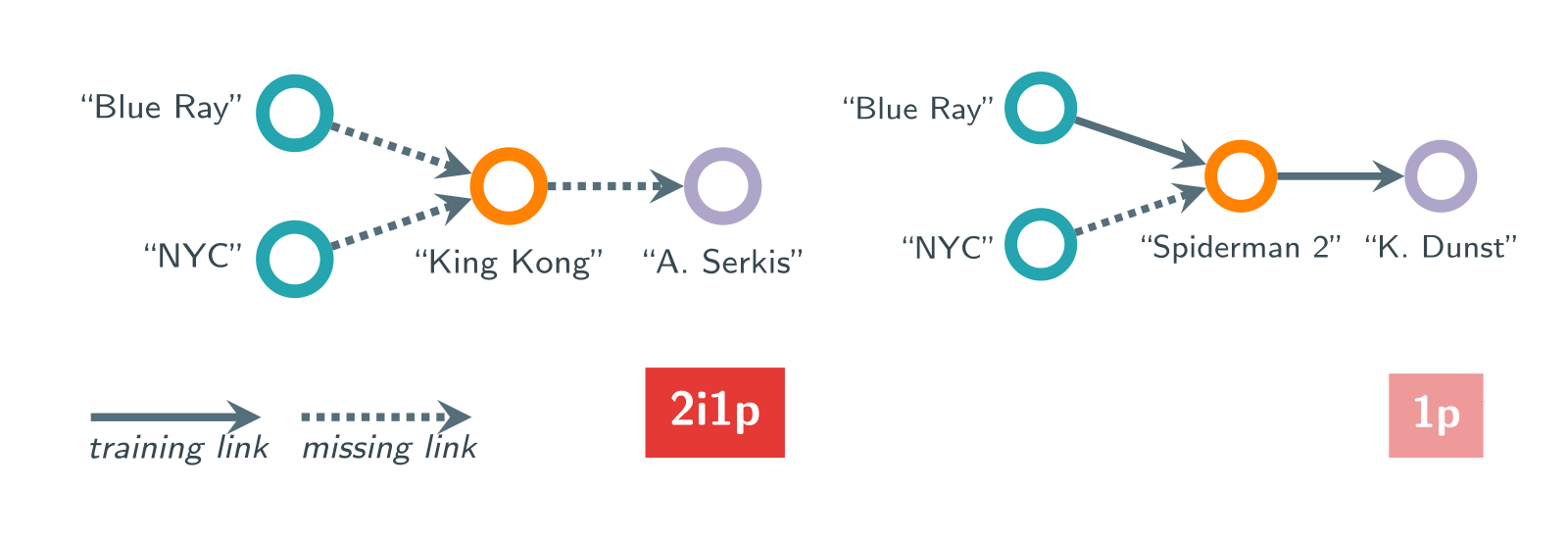

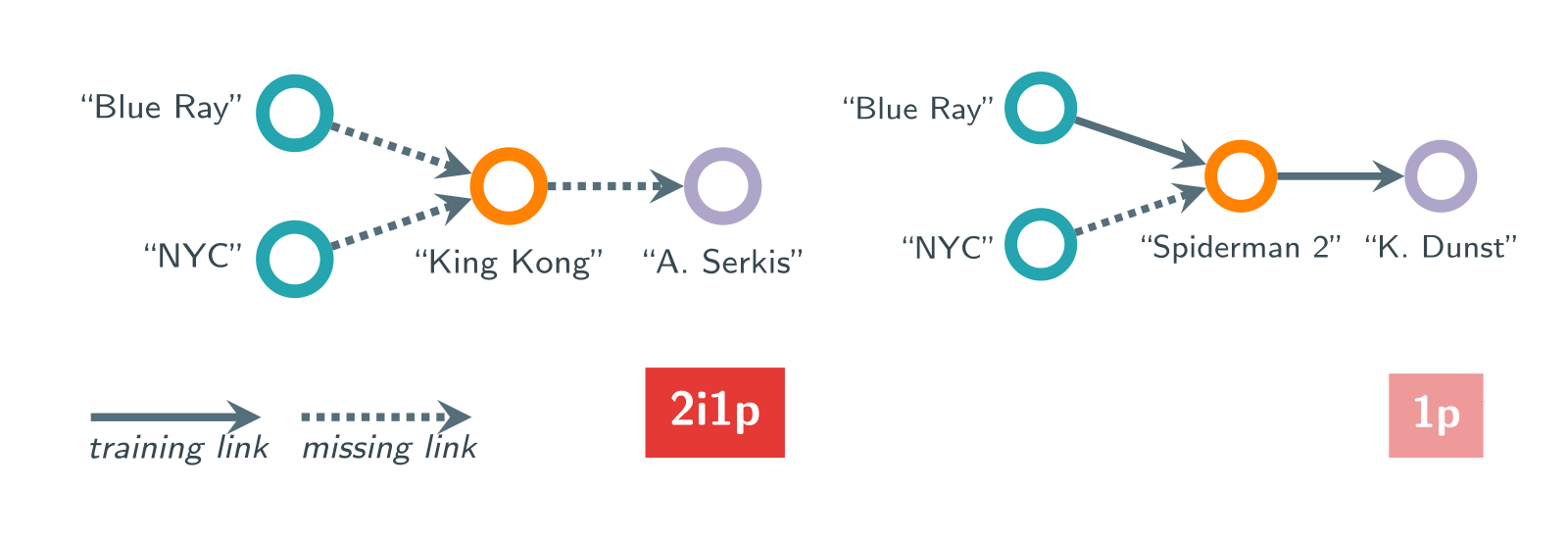

tl;dr: We highlight how common benchmarks for complex query answering with neural models are skewed towards "simple" queries, and propose new, more challenging benchmarks that address this issue.

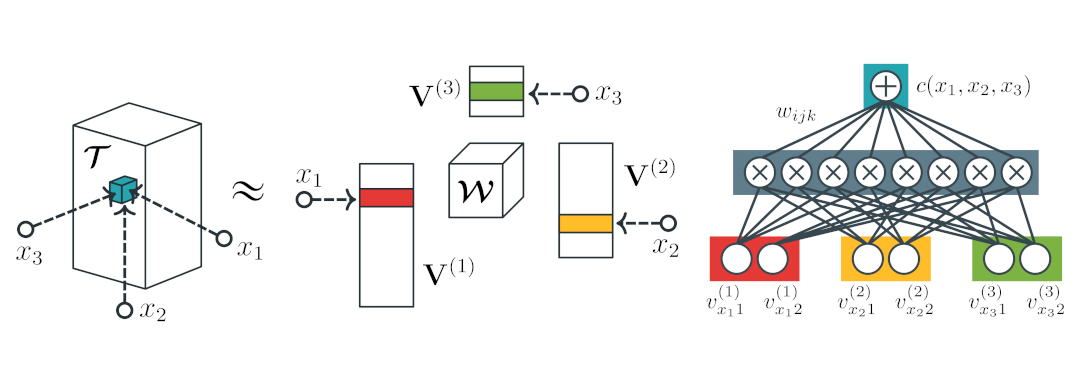

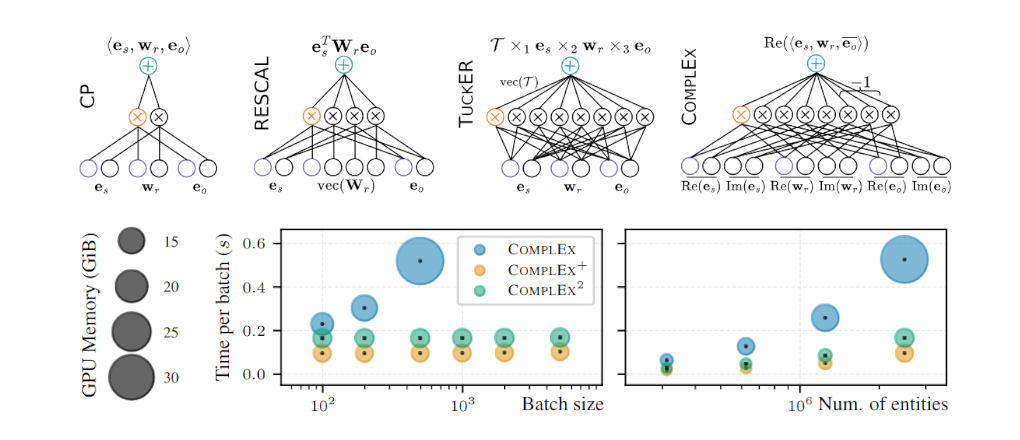

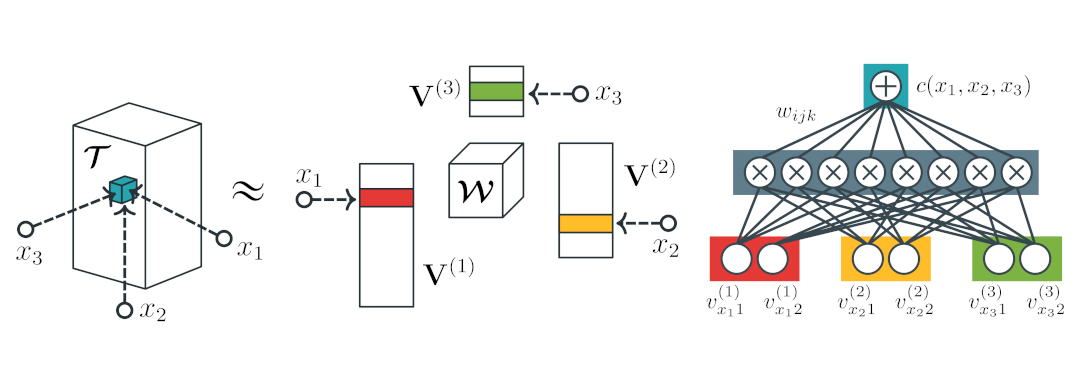

tl;dr: We investigate the connections between tensor factorizations and circuits, and how the literature on the former can benefit from the theory of the latter. We then devise a framework to build tensor factorizations and circuits that abstracts away from the many available options.

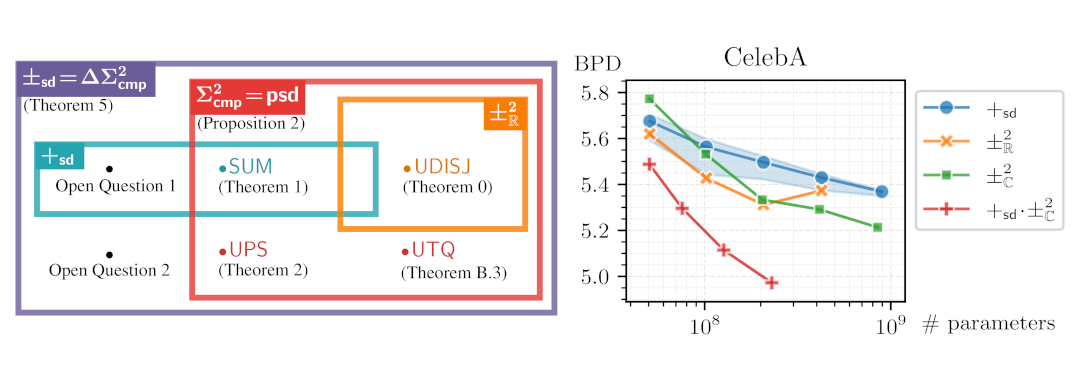

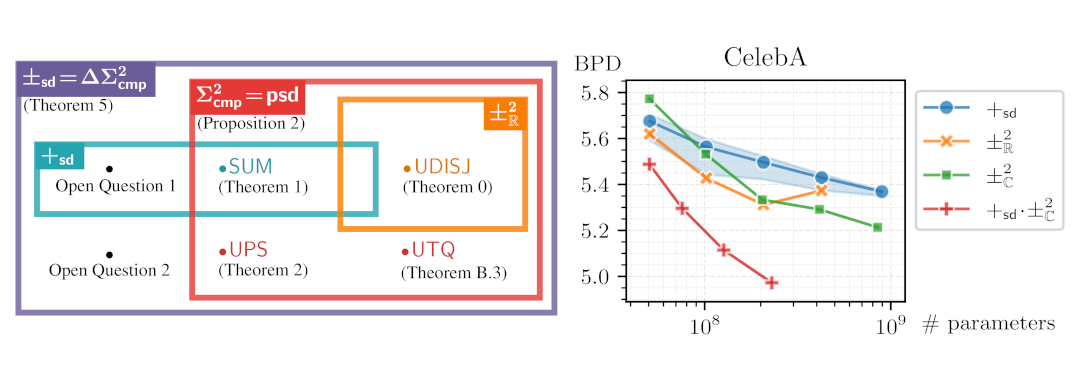

tl;dr: We theoretically prove an expressiveness limitation of deep subtractive mixture models learned by squaring circuits. To overcome this limitation, we propose sum of squares circuits and build an expressiveness hierarchy around them, allowing us to unify and separate many tractable probabilistic models.

tl;dr: We propose to build (deep) subtractive mixture models by squaring circuits. We theoretically prove their expressiveness by deriving an exponential lower bound on the size of circuits with positive parameters only.

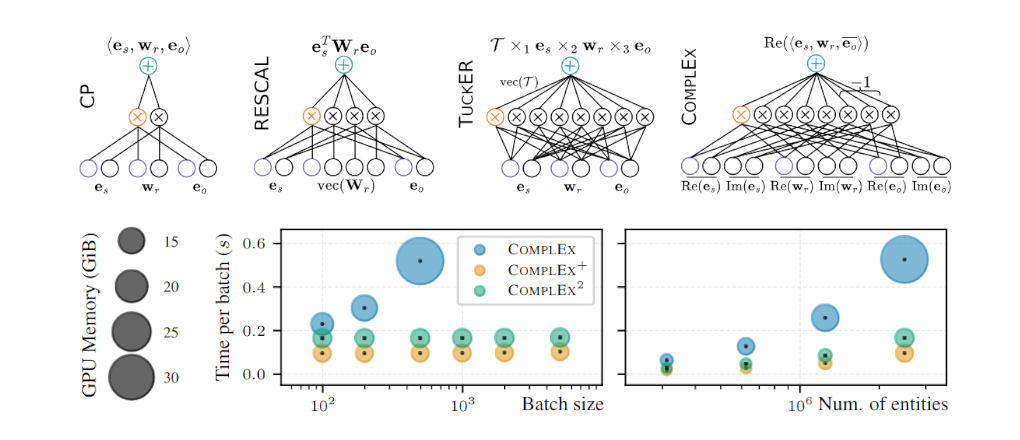

tl;dr: KGE models such as CP, RESCAL, TuckER, ComplEx can be re-interpreted as circuits to unlock their generative capabilities, scaling up learning and guaranteeing the satisfaction of logical constraints by design.

Software

Here is some software I have contributed to. Also check out my GitHub profile.


tl;dr: A language and framework for building, learning and reasoning about probabilistic machine learning models, such as circuits and tensor networks.

features: Support for tractable probabilistic inference operations that are automatically compiled to efficient computational graphs that run on the GPU, called circuits. Seamless integration of circuits with deep learning models and with any device compatible with PyTorch. Support for user-defined layers and parameterizations that extend the symbolic circuit language. A set of templates for constructing circuits by mixing layers and structures with just a few lines of code.

cirkit by The april Lab (GPL-3.0).
